Facebook is cleaning up its content – but not for the sake of its advertisers

Facebook has revealed the extent of its changes to content moderation. Yet despite qualms regarding overzealous censorship, advertisers are largely unmoved by the news – for once, it was not for their benefit.

The release of Facebook’s latest Community Standards Enforcement Report comes off the back of unyielding questions over brand safety on the platform, most recently sparked by the role Facebook Live played in the broadcast of the Christchurch massacre, as well as calls to break up the company in the name of fairness and regulation.

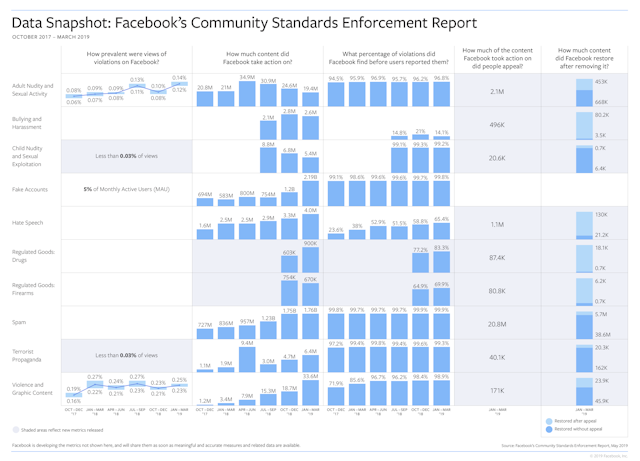

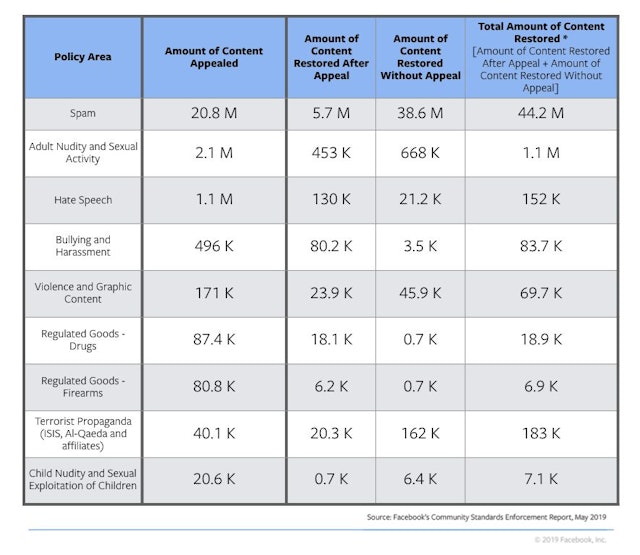

The report outlined the work Facebook's outsourced content moderators had done to remove the influence of bad actors on the platform. This included the deletion of 3.4bn fake accounts and 7.3m hate speech posts between October 2018 and March 2019.

The platform today (23 May) vowed to be more transparent in the publishing of such content moderation updates and figures, including the recurrence of posts involving child nudity, sexual exploitation, terrorist propaganda, illegal drugs and firearms. It also reiterated plans to increase the pay of its moderators and offer them access to counseling at all times of the day.

On a conference call with press today, founder and chief executive Mark Zuckerberg stressed the investment Facebook had made to clean up content on its platforms at the expense of business growth.

“I think the amount of our budget that goes towards our safety systems is greater than Twitter's whole revenue this year,” he said. “It certainly has a big impact on profitability ... we've shifted some of our best people and best resources onto addressing these major social issues.”

Zuckerberg said he did not believe the report would have a negative impact on the company’s ad business, noting that “if anything I'd expect it to be helpful on the business side”, given the theoretical enhancements to brand safety.

While the changes are unlikely to result in a measurable lift to the platform’s bottom line, Zuckerberg is likely to be proved correct.

One high-level media planner told The Drum they were marginally concerned the doubling down on moderation would result in the erroneous deletion of brand content, particularly from those looking to “authentically engage in relevant cultural and entertainment moments [that] are flagged as inappropriate”. However, they described Facebook’s commitment to tighten up its standards as “a good thing that would eventually make the platform a safe space for all”.

Figures suggest advertisers would have likely continued to pump money into Facebook, regardless of this report or any quieter attempts it might have made to remove malevolent content and bad actors from the platform. It reported a 16% year-on-year increase in average revenue per user in its Q1 earnings call in April, while daily active user figures remain buoyant despite calls to #BoycottFacebook after the Cambridge Analytica debacle.

The company’s recent pivot to privacy via changes to its user interface has also been designed to stem any drop-off in user numbers. And while there were incidents of brands publicly dropping off the platform throughout its annus horribilis, these were anecdotal, and – for the most part – temporary.

“Advertisers largely seem to have priced in temporary outrage as a cost of doing business with platforms: in the past year we've seen several protests and theatrical pulling of ad spend only to have it quietly return to earlier levels within mere weeks of the most recent offending event,” said Ana Milicevic, principal and co-founder of management consultancy Sparrow Advisers.

“But there's always a higher risk when advertisers are in poorly moderated UGC environments – most larger brands have other options, so what they're effectively telling the market is that content quality isn't their top consideration.”

Marketing consultant Katie Martell said that while quality content is an issue for advertisers, the reality is that “numbers talk”.

“For now, Facebook and Instagram still have two of the most engaged user bases we have to super-target ads to,” she said. “Measures like this announcement today are attempts to safeguard against the lack of trust and user abandonment tomorrow may bring. But for now, it’s only an issue if audience size and engagement drop.”

“Quite honestly,” said Gartner analyst Andrew Frank, “I don't know how much advertisers are tuned into this sort of level of scrutiny over Facebook. There's probably going to be some people in some situations who are persuaded by what they have said, but it seems like it will be drowned out by so much other news that I wouldn't expect it to have a very pronounced effect.”

That other news is what Facebook is likely to be actually worried about. In its 15-year history, it has proven itself to be (in Frank’s words) “irresistible” to advertisers: now it is facing scrutiny from governments across the world and numerous accusations of monopolization and undue power.

Today’s announcements allowed Zuckerberg to twist such charges in his favor.

On the press call, the founder attempted to downplay Facebook’s dominance in the global digital ad space, stating: “I think arguments that we're in some sort of dominant position there might be a little stretched” as part of an argument against breaking up his so-called monopoly.

“If the problems you're most worried about are harmful content, and election interference [...] those to me are the areas that are the most important social issues and I don't think the remedy of breaking up the company is going to address those,” said Zuckerberg. “It's going to make it a lot harder.”

He has reason to fight back, according to Brian Wieser, GroupM’s global president of business intelligence.

The former Pivotal analyst noted that while the degree to which bad content has impacted user numbers or usage is “anything but clear, regulator and government efforts to curtail the company are only going to accelerate”.

“If they can mitigate some of that by being proactive, if there’s one thing they can point to that is clear, they might [avoid] not just the threat of regulation, but the threat of the removal of the regulation that has benefited them,” he said, adding that while today’s announcements may mitigate some advertiser risk, he is skeptical the platform will ever again get fully ahead of bad actors equipped with the same tools as its developers and moderators.

If a breakup ever did occur, advertisers would be left with the same options as now: spend on one of Facebook’s products, spend on more than one of Facebook’s products or stop spending entirely. A disintegration may well detach the targeting synergies between platforms somewhat, but outside of the walled garden, it is unlikely that advertisers would be perturbed enough by this to meaningfully alter spend.

In the here and now, however, they are left with two choices: anger or prolonged apathy. Renee Murphy, principal analyst at Forrester, advocates for the former.

“I would caution brands that the real risk isn’t really about advertising or communicating with customers – it's the weaponization of the platform against you,” she said. “Brands face the same risks on this platform that governments and democracies do. What are they doing to protect themselves from the risk? The only real way to avoid that risk is to quit using the platform as a branding mechanism.”

Murphy added: “I have learned never to believe a word they say. They’re like that guy you dated who gave you nothing but backhanded compliments. You know, ‘you’d be prettier if you weren’t so fat’.

“They can keep patting themselves on the back all they want, but in the end, it will be a lack of ethics and the lack of understanding of their role in society that will lead people not to trust that platform. So much of what they do is a black box, including how effective the advertising is, and I think once engagement drops off, it’s all over.”
