How does mixed reality compare to VR? Hands on with the Microsoft HoloLens

Back in 2015, Microsoft took to the stage at its annual Build developer conference to show the world a vision of the future it called ‘mixed reality’. It came in the form of a product that had already spent five years in development: a wearable device that scanned your physical surroundings and overlaid digital objects, or ‘holograms’, that only the wearer could see.

A number of commercial possibilities were demonstrated when it launched, from its potential as a team collaboration and conferencing tool to its possible use as a 3D design tool, all the way through to its capability as a gaming device. What stood out was its capacity for contextual and environmental understanding. And after a year of waiting, I was fortunate enough to have the chance to try HoloLens for myself.

The past

We have already been burnt by, and disappointed with, head-mounted displays promised as a vision of the future, a prime example being Google Glass in 2013. However, although Glass was criticised for over-promising and under-delivering on its features and capabilities, I do think it put an important stake in the ground for augmented reality (AR), which until that point had only been experienced as an app on our mobiles.

When I got my hands on Google Glass as part of the developer programme back in 2014, I experimented by wearing it for a month in a wide range of situations, as documented in "Living with Google Glass". The two key problems I discovered were the cultural awkwardness of wearing the device in public places, and the device's limited ability to recognise its surroundings: what it offered was essentially a notification board, not true contextual understanding.

The HoloLens hands on

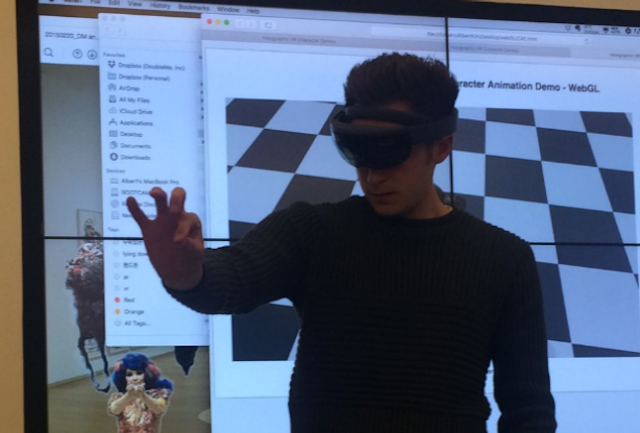

When I finally got the opportunity to try the HoloLens earlier this month, I'll admit I had my reservations, but I was still excited about trying a device that might help shape the future of AR. I used the device to test out some of the out-of-the-box demo apps, as well as some custom content produced by DoubleMe, a holographic VR company that digitally captures people's movements and then transforms them into VR content as well as AR through mobile.

The device

Having tried a range of AR, VR and immersive experiences over the last couple of years, I was curious to see how the HoloLens might hold up in the increasingly competitive market.

The device itself looks like a cross between a motorcycle helmet with the top cut off and a pair of skiing goggles with a transparent visor covering both eyes. It was a completely untethered experience with all the hardware self-contained, unlike most VR experiences to date, which require a PC or console hook-up to power the tech.

It uses outward-facing cameras that continually scan the environment and surfaces around the wearer in three dimensions (basically two Kinect cameras). It feels a little heavier than an Oculus Rift or HTC Vive headset, and also features an inner band you can adjust for a more comfortable fit. Its main point of difference, though, is how you interact with it. You're able to give voice commands, as you could with Google Glass, but you can also use hand gestures to navigate the device, allowing you to move content around physical spaces and interact with experiences.

After being shown the basic gesture controls, including pinch, rotate and bloom, I was surprised how naturally I slipped into using them and quickly forgot I was wearing the device. I started picking up digital characters and placing them on speakers in the office, and sticking virtual displays and social feeds to the walls around me. The device's spatial memory and 3D understanding meant that I wasn't limited to viewing 3D objects from a fixed point: I could step closer to see things in more detail, or walk around an object to see it from another angle. It felt very much like the ‘Help me, Obi-Wan Kenobi’ experience from the original Star Wars.

The device also allows you to pair multiple HoloLens headsets, so several users can experience the same content and collaborate on group projects. I got a chance to see a range of pre-rendered, lifelike holograms by DoubleMe, as well as an alien shooter game from Microsoft in which the enemies literally came out of the walls. After getting lost for 20 minutes or so in the gaming experience, I felt reassured that this was an exciting development towards the future of mixed reality experiences.

It's not quite a finished product yet. My main concern was the device's narrow field of view, which did somewhat detract from the overall experience. With only around 30 per cent of my vision covered by the display on this version, the magic was lost when looking at larger content, forcing me to step back to see the object or person in full.

Let’s not forget, though, that what I tried out was just the developer edition, not necessarily what the final product will look or feel like to use, and it already felt quite refined.

The exciting future

With the device still only at the development stage, it may be some time until we see what the final HoloLens will look like. Even at this stage, though, I can see a huge range of potential commercial applications for the next generation of this device when it eventually comes to market at some point in 2017/18.

There have been some scary hyper-reality visions of the future shared across the web, in which our physical and digital worlds start to truly collide. From one afternoon’s testing, I can see the potential for it to be a magical and open experience compared with its VR counterpart, which can feel very solitary and alienating.

Microsoft has the potential to really get it right with the HoloLens if it takes its time with the device and listens to feedback. If it refines the already strong hardware to cover full peripheral vision, and explores how the device can fit more naturally into existing experiences, it will work.

Will Harvey is innovation lead at VCCP