Obfuscation and smart contracts: artists seek to prevent AI from stealing their work

As brands rush in to capitalize on image-generating AI models like Dall-E 2 and Midjourney, a growing number of critics have been speaking out against the tendency of these models to plagiarize the work of (human) artists. We explore this issue, including some potential solutions, as we continue AI to Web3: the Tech Takeover, the latest Deep Dive from The Drum.

Midjourney is one of three companies that were sued by a trio of artists earlier this year. (Image: Adobe Stock)

In a very short period of time, AI-generated artwork has exploded into popular culture.

Generative AI – a term that refers to a class of artificial intelligence models designed to produce images, text and other forms of content – has become the buzz-phrase du jour throughout much of the marketing world in recent months (see our complete guide to essential AI terms). Inspired by the capabilities and rapid rise of models like ChatGPT and Midjourney, a cohort of big-name brands – from Coke to Instacart to Snapchat – have been rushing to stake their claims in the booming generative AI sector.

But in the glare of the current generative AI mania, it can be easy to overlook a dark spot: the negative impact the technology is having on some artists.


Today’s AI and machine learning models are typically trained on vast quantities of data. In the case of image-based generative AI models such as Midjourney and Dall-E 2, much of that data is artwork gleaned from the internet. That means that when a user prompts one of these models to create, say, a “hyperrealistic, sci-fi, digital image of a robotic bird flying above a dystopian cityscape,” the model will duly get to work generating an “original” work of art that may be largely derived from the work of human artists.

What’s more, many generative AI models can create such an image in a matter of seconds, whereas a similar piece might require months of diligent labor from a human artist. And many of these AI models are completely free to use.

How can artists hope to compete with this new reality? And perhaps even more pressingly, is there anything that artists can do right now to protect their intellectual property – and their incomes – in the age of generative AI?

‘I thought, what’s the point of trying?’

Deb JJ Lee, a freelance illustrator who currently lives in Brooklyn, New York, remembers the moment they first discovered their work had been stolen by a machine. “A friend of mine reached out and said, ‘Hey, I noticed that someone is planting your work into a [generative AI] model and they’re not telling anybody who the artist was, but it was clearly yours,’” says Lee. “I looked at it and my stomach dropped … I can literally point out which piece was copied from which piece.”

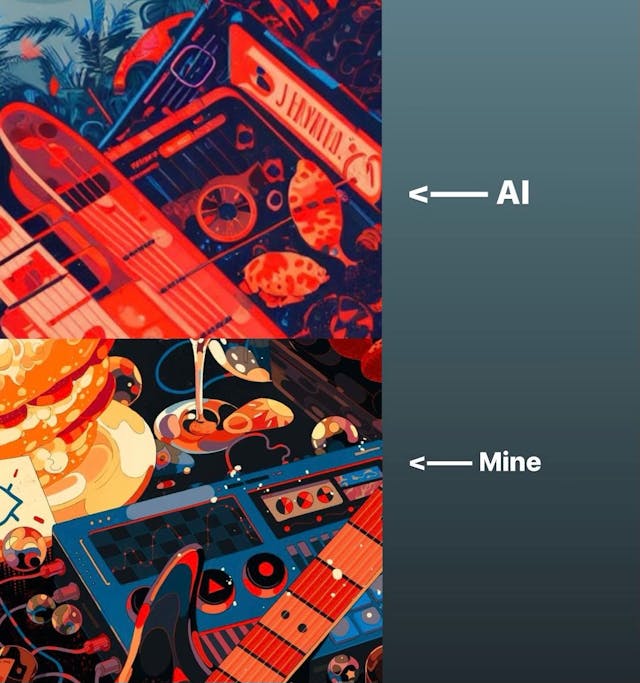

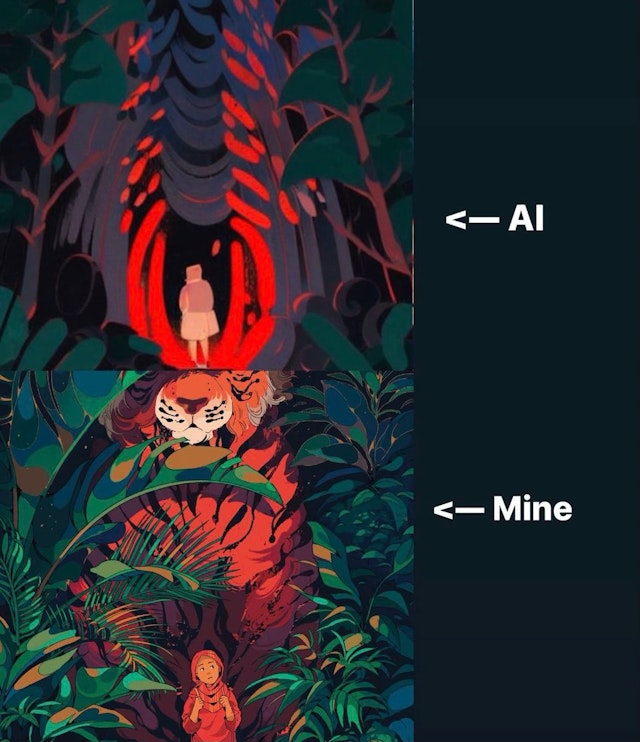

To illustrate the similarities between their artwork and that of the AI model, Lee provided The Drum with the following images:

To Lee, the experience of having their artwork poached from the internet and recycled by an AI model was an affront to the passion and craft they had devoted much of their life to. “It’s really sad. When you’re an illustrator or an artist, forming your craft and your voice is a lifetime feat, a lifetime work in progress. You never stop … When you’re illustrating, you’re using everything that your background gives you … Your inspirations for the way you draw could be your childhood, books, your past trauma. So when an AI just takes that and just spits it out, it’s just like, ‘are you serious, dude?’ Like, AI doesn’t have trauma.”


Lee’s artwork is striking in its use of vibrant color. In many of their pieces, DayGlo hues pop off the page, filling the viewer with a sudden, energetic buzz. Seeing their work digested by a generative AI model temporarily took the color out of the vision that Lee held for their career. “I was just really sad. For a while I thought, ‘what’s the point of trying?’”

Lee isn’t alone in their critical stance towards generative AI (“I think for humanity, it’s a bad thing,” they say). Earlier this year, three artists filed a lawsuit against Midjourney, Stability AI and DeviantArt – three companies that have launched image-generating AI models.

Potential solutions

It often takes the law a while to catch up with technological innovation. In some cases, it’s not until well after a technology becomes highly developed and interwoven with many people’s day-to-day lives that lawmakers and regulators have a chance to grasp the changes that have taken place and to respond accordingly.

This has certainly been the case with social media and big tech, as evidenced most recently by TikTok CEO Shou Zi Chew’s congressional hearing. And at this point, it appears likely that a similar pattern will play out around generative AI.

“Artists are generally protected against straightforward copying by the existing legal framework, whether it is done by a machine or a human,” says Matthijs Branderhorst, an attorney who specializes in, among other areas, technological patents. “The uncharted territory we [currently] find ourselves in with generative AI is that the original work from artists is used to train AI algorithms.”


Some believe that a potential solution can be found in blockchains: tamper-resistant digital ledgers that give every party to a transaction a shared, transparent record of it. Through the use of blockchains, so this line of thinking goes, artists can attach smart contracts to their work. These are self-executing programs stored on a blockchain that automatically carry out their terms as soon as certain predetermined conditions have been met. They could ensure that artists are compensated, or at least notified, whenever another artist (human or machine) borrows their work.

“The way blockchain works is you literally can’t post or use an image if it has a smart contract and you haven’t complied with [the terms of that smart contract],” says Mark Long, author and CEO of gaming company Neon. “The contract goes wherever the image goes. I think it returns power to the creators … if we could put these license agreements in place, then the revenue goes directly to the creator.”

The specifications of smart contracts, Long adds, can be tailored by individual artists: “Maybe the artist never wants anybody to be able to use it, or they want them to be able to look at it but nothing else – they can set that in their smart contract.”

Sounds simple enough. But how might the use of smart contracts be integrated into copyright laws, which vary from region to region? Put another way, if more and more artists began using smart contracts to protect their work from generative AI models, could those practices be absorbed into existing legal frameworks?

Branderhorst, the patent attorney, has his doubts about such a possibility: “The decentralized character of the blockchain and the distribution of nodes across many different jurisdictions would [be] difficult to align with the territorial nature of copyright law.”

Another solution, one that Lee, the Brooklyn-based illustrator, is currently using, is a technology called Glaze, developed by a team of researchers from the University of Chicago. As the name might suggest, Glaze adds a subtle layer of distortion to works of art. The distortion is largely imperceptible to the human eye but is designed to make it much harder for generative AI models to identify and mimic the characteristic elements of a particular artist’s style. It’s like putting on aviator sunglasses in front of a retina-scanning device: just a thin layer of obfuscation, but enough to render the device ineffective.

Why should all of this matter to marketers?

Generative AI poses a new dilemma for marketers. On the one hand, it’s a radically transformative technology with the potential to save huge amounts of time, energy and resources. On the other, it poses an existential risk to the livelihoods of many artists.

How, then, should brands proceed into this brave new (AI-generated) world?

For one thing, it seems clear that any brand that works with human artists, such as an illustrator like Lee, should engage those artists in a conversation before launching any campaign that would leverage AI-generated art. As this technology proliferates, so too will awareness among artists about its potentially harmful impacts. An incautiously launched generative AI campaign runs the risk of alienating a brand’s (human) artist collaborators.

“As a guy that uses art [in his work], I’m sympathetic to artists and I want to make sure they get compensated, so I’m going to pick [generative AI platforms] on which I know the artists are protected,” says Neon’s Long.

Additionally, as the lawsuit mentioned above should make clear, generative AI raises some legal – in addition to ethical – concerns. Any marketer who is considering integrating generative AI in a campaign would be well-advised to pay close attention to the laws that are likely to grow and evolve around this new technology – including, perhaps, laws intended to protect artists’ intellectual property.

“It will be some time before the legal landscape has settled [and] more lawsuits are likely to follow,” says Branderhorst. “But hopefully a balance will be found where both sides [the companies building generative AI models and human artists] win – and not just the lawyers.”

For more on the latest happenings in AI, web3 and other cutting-edge technologies, check out The Drum’s latest Deep Dive – AI to Web3: the Tech Takeover. And don’t forget to sign up for The Emerging Tech Briefing newsletter.