AI or BS? How to tell if a marketing tool really uses artificial intelligence

Amid the giddy excitement about artificial intelligence, chronicled in our latest deep dive, columnist Sam Scott cautions us to look twice at some of the big claims about how AI is supposedly remaking marketing.


Advertisers and publicists know that they can use the phrase “artificial intelligence” to describe software and that almost everyone will repeat it without giving it a second thought. Well, let’s give it a second thought.

A common way to seem trendy is to use a new buzzword to refer to something that already exists. The “cloud” is usually just someone else’s IT infrastructure. The “blockchain” is just a digital ledger. An “NFT” is just an online graphic design accompanied by the ludicrous proposition that someone can pay to own it.


One of the most recent buzzwords, of course, is the “metaverse.” But it is simply the virtual reality platform of Meta, the company formerly known as Facebook. And VR is nothing new. From stereoscopic images in 1838 to Second Life in 2003, VR keeps trying to come back, but it never succeeds.

Last week, a New York magazine feature by Paul Murray proclaimed the metaverse a “deserted fantasyland.” At the same time, Meta’s commerce and fintech lead, Stephane Kasriel, announced on Twitter that the company would be “winding down digital collectibles (NFTs).”

Mark Zuckerberg himself told his employees last week that now “our single largest investment is in advancing AI and building it into every one of our products. We have the infrastructure to do this at unprecedented scale, and I think the experiences it enables will be amazing.”

And what about all those marketing consultants and influencers who breathlessly proclaimed that the metaverse and NFTs were the future and that all companies should immediately use them or perish?

That sound you heard last week was them switching their Twitter and LinkedIn profiles from proclaiming expertise in the metaverse and NFTs to proclaiming expertise in artificial intelligence, even though they have likely never taken a single university course in the subject.

Several marketing software companies have made similar pivots and recently released tools that purport to use AI. But is that true – or are they just using the newly popular buzzword? For this column, I contacted Folloze, HubSpot, Intercom, Prequel and Propel to find out. More on their answers below.

What is AI in general?

First, AI is not some superintelligence. Basically, it is a computer program that “learns” and “improves itself” over time by detecting patterns in sets of information and calculating probabilities.

Take the text outputs of the trendy ChatGPT tool. Last month, Stephen Wolfram, the British pioneer in the development and application of computational thinking, explained how it works in a post on his personal blog.

“What ChatGPT is always fundamentally trying to do is to produce a ‘reasonable continuation’ of whatever text it’s got so far, where by ‘reasonable’ we mean ‘what one might expect someone to write after seeing what people have written on billions of web pages’,” he wrote.

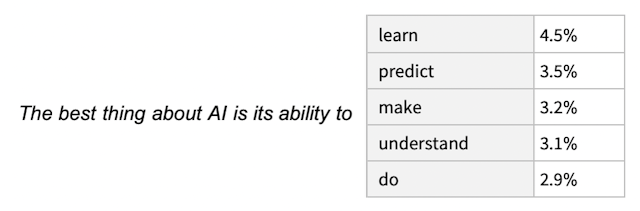

Put simply, ChatGPT takes an initial prompt and determines, word by word, what most often comes next based on the existing texts that it has scanned from across the internet. In Wolfram’s words, “it’s just adding one word at a time” – but it does so so quickly that it seems as though a robot is writing an original, whole block of text.

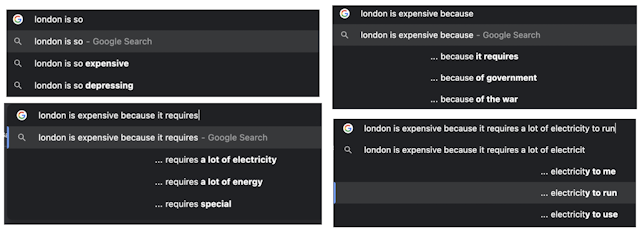

Essentially, ChatGPT is a gigantic version of Google autocomplete. Here is an example that I created from the most common words Google suggested after the search “London is so.”

Here is what my imitation of ChatGPT wrote: “London is expensive because it requires a lot of electricity to run.” (I have not lived there since 2001, when I worked as a bartender and could get a pint of Foster’s in South Kensington for £2.50. So I have no idea if that statement is true.)
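The same experiment can be mimicked in a few lines of code. The sketch below is a toy, not how ChatGPT is actually built: it assumes a tiny, made-up corpus in place of billions of web pages and counts whole words rather than the sub-word tokens that real language models use. It continues a prompt one word at a time, always picking the word that most often followed the previous one. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def build_bigram_counts(corpus: list[str]) -> dict[str, Counter]:
    """For every word, count which words follow it and how often."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def continue_greedily(prompt: str, follows: dict[str, Counter], max_words: int = 8) -> str:
    """Append one word at a time, always choosing the most frequent follower."""
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # nothing ever followed this word in the corpus: stop
        next_word, _count = candidates.most_common(1)[0]
        words.append(next_word)
    return " ".join(words)


# Hypothetical toy corpus standing in for "billions of web pages".
corpus = [
    "london is expensive to live in",
    "london is expensive to live in too",
    "london is busy at night",
    "paris is expensive to visit",
]
follows = build_bigram_counts(corpus)
print(continue_greedily("London is", follows))
```

Because this toy only ever looks back one word, it loses track of the sentence quickly; large language models condition on much longer stretches of preceding text, which is one reason their output reads far more fluently.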

What is artificial intelligence on a technical level?

Like “metaverse” and “NFT,” “artificial intelligence” is, I think, just a new buzzword for something mundane – in this instance, pattern-recognition software that finds the patterns in whatever data it is trained on and then just repeats those patterns. To me, ChatGPT just sees which words most commonly follow other words and then uses them as well. It just does it very quickly.

But I will be the first to say that I am not an expert. Is my definition of AI accurate?

“I always describe it as ‘matrix multiplication with gradient descent,’ but your ‘pattern recognition’ definition is pretty good,” British technology entrepreneur Dr Ewan Kirk told me in an interview for this column.

Dr Kirk is the chair of Cambridge-based accelerator DeepTech Labs, founder of Cantab Capital Partners, chair of the Isaac Newton Institute for Mathematical Sciences and non-executive director of BAE Systems.

He added: “The one thing it doesn’t capture is its probabilistic nature. It doesn’t quite ‘repeat the patterns.’ What it does is construct the most likely pattern given the inputs. The pattern can be a digit (given a handwritten number, what’s the most likely candidate for the real value), or it can be much more complicated (given a textual prompt like ‘teddy bears programming a computer underwater,’ what’s the image that can be constructed that most closely matches the prompt).”
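Dr Kirk’s point about probability can be illustrated with his handwritten-digit example. In the hypothetical sketch below, a classifier is assumed to have already produced raw scores for three candidate digits (the numbers are invented). A softmax function turns those scores into probabilities, and the answer is the most likely candidate rather than a copied pattern. The final step shows sampling, which many generative tools use so that the same prompt can yield different outputs.

```python
import math
import random


def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtracting the max avoids overflow in exp()
    exps = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


# Hypothetical raw scores a digit classifier might give one smudged handwritten digit.
scores = {"1": 2.0, "7": 1.5, "9": 0.2}
probs = softmax(scores)

# Classification: report the single most likely candidate.
most_likely = max(probs, key=probs.get)

# Generation: many generators sample from the distribution instead,
# so less likely candidates are occasionally chosen.
rng = random.Random(42)  # fixed seed so the run is reproducible
sampled = rng.choices(list(probs), weights=list(probs.values()), k=5)

print({label: round(p, 3) for label, p in probs.items()})
print("most likely:", most_likely)
print("sampled:", sampled)
```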

So, on a basic level, what exactly is AI?

“In technical and scientific terms, it’s generally ‘machine learning,’ which uses various complicated and subtle statistical techniques to extract statistical relationships from data,” Dr Kirk said.

“In almost all cases, this involves running a labeled data set (such as lots of pictures of animals labeled with their type like dog, cat, meerkat) many times through an algorithm and tuning the algorithm so that when it has to guess what an image is when it’s not labeled, it gets the answer right as often as possible.”
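The training loop Dr Kirk describes, along with his shorthand of “matrix multiplication with gradient descent,” can be sketched with a minimal logistic-regression classifier. Everything here is an assumption for illustration: each “image” is reduced to two made-up, pre-scaled measurements, label 0 means cat and 1 means dog, and the learning rate and number of passes are arbitrary. Real image models have millions of parameters, but the idea is similar in spirit: pass over the labeled data many times and nudge the parameters so the guesses move closer to the labels.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights: list[float], bias: float, x: list[float]) -> float:
    """Probability that example x belongs to class 1 (here, "dog")."""
    # Weighted sum of the features: one row of a matrix multiplication.
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train(examples, labels, epochs=1000, learning_rate=0.1):
    """Fit a logistic-regression classifier with batch gradient descent."""
    n_features = len(examples[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(examples)
    for _ in range(epochs):  # run the whole labeled set through many times
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(examples, labels):
            error = predict(weights, bias, x) - y  # log-loss gradient w.r.t. the score
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        # Gradient descent: move each parameter a small step downhill.
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias


# Hypothetical labeled data: two made-up, pre-scaled measurements per image.
examples = [[-2.0, -1.0], [-1.5, -2.0], [-2.5, -1.5],  # cats (label 0)
            [2.0, 1.0], [1.5, 2.0], [2.5, 1.5]]        # dogs (label 1)
labels = [0, 0, 0, 1, 1, 1]

weights, bias = train(examples, labels)
unlabeled = [1.8, 1.2]  # a new, unlabeled "image"
print("P(dog) =", round(predict(weights, bias, unlabeled), 3))
```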

He added: “For lay people, AI can mean anything from an AI-enabled toothbrush to HAL 9000.”

The FTC is concerned about fake AI claims

The average person might call many things “artificial intelligence,” but the US Federal Trade Commission does not want companies to do the same thing.

“One thing is for sure: [AI is] a marketing term. Right now, it’s a hot one,” it said in a blog post last month. “And at the FTC, one thing we know about hot marketing terms is that some advertisers won’t be able to stop themselves from overusing and abusing them.”

One of the FTC’s concerns? Whether companies are making false claims that they use artificial intelligence. According to a 2019 report from London venture capital firm MMC, for example, 40% of European startups classified as AI companies do not actually use AI in a material way.

“If you think you can get away with baseless claims that your product is AI-enabled, think again,” the FTC wrote. “In an investigation, FTC technologists and others can look under the hood and analyze other materials to see if what’s inside matches up with your claims. Before labeling your product as AI-powered, note also that merely using an AI tool in the development process is not the same as a product having AI in it.”

The FTC added: “You don’t need a machine to predict what the FTC might do when those claims are unsupported.”

How to tell if a product actually uses AI

How would Dr Kirk tell whether a company might be fabricating its AI claims?

“The top sign is that the company doesn’t have a background in data science, and they suddenly start talking about AI,” he said.

“Another sign is that when the company talks about AI, there’s no real detail about the benefits of whatever AI they are pushing. If there is somebody selling an AI-enabled toothbrush, ask yourself, ‘Why do I need an AI-enabled toothbrush? What tangible benefits will it give me over my old-fashioned manual toothbrush? Could this ‘sprinkling of magic AI fairy dust’ just be marketing?’ It often is.”

Basically, when a company is selling a marketing tool that purports to use artificial intelligence, marketers should check whether the organization has an active data science team. If not, it might have just slapped the term “AI” on a product for the buzzword effect – perhaps just like this Lynx deodorant that marketing consultant and commentator Tom Goodwin recently saw and tweeted.

Other things to look for

Arif Janmohamed, a partner at Lightspeed Venture Partners, wrote in Fortune magazine in 2018 that “AI incorrectly has become a catchall phrase for anything that has to do with data or workflow. They also tend to liberally throw around 'algorithm,' a word often associated with AI. But just because a system has algorithms that drive certain outcomes doesn’t necessarily mean it is AI.”

To cut through the hype, Janmohamed suggested asking these questions: do the people claiming to be AI experts have experience taking on huge AI challenges, to the point that they have an extreme advantage over competitors? Do they understand the intricate technical details of what it takes to build an autonomous system? Are they attracting the talent to attack the market?

Writing in 2020 in The Enterprisers Project, a community for chief information officers and IT leaders, Stephanie Overby described these signs of “AI washing”: the product requires minimal data for training, the “AI” needs business rules in order to work, there is a notable lack of case studies, the solution does not get better over time, the staff is light on AI talent, and there is no clarity about how the AI works.

The best question to ask

Several martech companies have recently released new tools that purport to use artificial intelligence. But do they really do so?

Folloze has an AI content recommendation and buyer insight engine. HubSpot has ChatSpot and Content Assistant. Intercom has three features: a composer AI, conversation summarization, and article generator AI. Prequel has an Artique AI advertising and marketing graphics platform. Propel has an AMIGA AI Assistant for PR.

In 2017, Galen Gruman, executive editor of the tech publication InfoWorld, wrote that the quickest way to tell whether software is truly using AI is to ask whether it can learn and adjust on its own without a software update.
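To make Gruman’s test concrete, here is a hypothetical sketch of software whose behavior adjusts without a code update. It is a deliberately simple stand-in, a smoothed click-through-rate estimate, and not a description of how any of the five companies’ products work; the class name, content items and prior values are all invented. The code itself never changes, yet its recommendation shifts as new behavioral data arrives.

```python
from collections import defaultdict


class OnlineRecommender:
    """Hypothetical recommender whose behavior changes with data, not code."""

    def __init__(self, prior_clicks: float = 1.0, prior_views: float = 2.0):
        # Smoothing prior so that one lucky click does not dominate.
        self.prior_clicks = prior_clicks
        self.prior_views = prior_views
        self.clicks: defaultdict[str, int] = defaultdict(int)
        self.views: defaultdict[str, int] = defaultdict(int)

    def record(self, item: str, clicked: bool) -> None:
        """Update the stored statistics with one new piece of behavioral data."""
        self.views[item] += 1
        if clicked:
            self.clicks[item] += 1

    def score(self, item: str) -> float:
        """Smoothed estimate of how often this item gets clicked."""
        return (self.clicks[item] + self.prior_clicks) / (self.views[item] + self.prior_views)

    def recommend(self, items: list[str]) -> str:
        return max(items, key=self.score)


rec = OnlineRecommender()
items = ["whitepaper", "webinar"]
rec.record("whitepaper", clicked=True)
rec.record("webinar", clicked=False)
before = rec.recommend(items)

# New behavior arrives; no software update is deployed.
for _ in range(4):
    rec.record("webinar", clicked=True)
after = rec.recommend(items)
print(before, "->", after)
```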

So, I asked spokespersons for those five martech companies Gruman’s specific question. I have put their responses in alphabetical order in full below so that readers can judge for themselves.

Folloze: “[Chief product officer and cofounder David Brutman] said yes, the model is adjusting the recommendations based on additional behavioral data captured.”

HubSpot did not respond to my request for comment on that specific question.

Intercom: “Our Inbox features powered by GPT-3.5 use it in a stateless way. We don't update the GPT-3.5 model weights. However, the context that is in the prompts to GPT-3.5 varies depending on the user context, and so is customized. Additionally, we have version 2 features that are currently in testing and will get better as there is more customer support data available. These also don't fine-tune the model however; pretty much no one is fine-tuning GPT-3.5 or GPT-4 models...these features aren't generally available yet. But the system overall will learn and adapt.”

Prequel: “I do not believe so.”

Propel: “Yes, the solution can learn and adjust on its own. Amiga utilizes the constantly updating pitch data from Propel's proprietary dataset. In addition, because Amiga leverages Open.AI's API, and because of the nature of that API, the AI is constantly learning and evolving.”

What marketers should remember

But it is not just potentially exaggerated claims of using AI that bother me. First, I worry that we are heading towards a world that will lose all creativity and become extremely boring. Basically, AI finds the most prevalent existing patterns and commonalities and then reproduces them quickly and cheaply. It cannot create anything completely new and original.

An obsession with “optimization” is already making all advertising and music sound the same. Alex Murrell, strategy director at the British brand agency Epoch, also recently published an essay entitled The Age of Average in which he shows how everything from apartment interiors to coffee houses to architecture to people to media to brands now looks the same.

Now, to borrow a good line from a recent Farnam Street blog post, tools such as ChatGPT are merely creating “average writing available on demand.” And never forget what David Abbott said: “Shit that arrives at the speed of light is still shit.” Even if it is created by AI.

Second, AI will mean that what we see online will increasingly be fake.

Third, many AI systems seem to be trained on, and to use, other people’s intellectual and creative property without any payment. Take Nothing, Forever, the “AI Seinfeld” Twitch stream that debuted last month and plays supposedly AI-generated content based on the famous 1990s US sitcom. Did NBC, Jerry Seinfeld or Larry David get paid for that?

Stanford University’s Center for Research on Foundation Models announced this month that its researchers had basically cloned ChatGPT to create a model called Alpaca for a mere $600. But isn’t that what AI always does anyway? Get trained on and recreate others’ intellectual property for cheap or free?

Creative technologist Paul DelSignore also recently reminded us on Medium that companies do not own anything that they create with AI tools. Copyright offices will not register such material because it is created by machines, not humans.

Remember, too, that the “metaverse” has really just been Meta’s own virtual reality platform. Everyone who talked about the “metaverse” instead of “virtual reality” was doing the company a favor. The same is true when people repeat a company’s “AI” claims without verifying them.

This is why the marketing industry should never act as a cheerleader, repeating buzzwords and accepting technical claims without having independent technical experts review them. Just because advertisers and publicists tell you that something uses artificial intelligence does not make it so. See, for example, this tongue-in-cheek Havas Tel Aviv ad for the Israeli convenience store chain AM:PM.

AI is the new metaverse is the new NFT is the new Clubhouse is the new podcast is the new personalization is the new brand purpose is the new blockchain is the new chatbots is the new growth hacking is the new agile is the new Pokemon Go is the new QR code.

Or as Dan Ariely, a professor of psychology and behavioral economics at Duke University, once put it in the context of another recent fad: “Big data is like teenage sex: everyone talks about it, nobody really knows how to do it, everyone thinks everyone else is doing it, so everyone claims they are doing it.”

The Promotion Fix is an exclusive column for The Drum contributed by Samuel Scott, a global keynote marketing speaker and head of marketing at the IT mapping platform Faddom in Tel Aviv. His opinions are only his own.