IPG calls out Facebook & YouTube for brand-damaging and ‘inconsistent’ misinformation policies

A new report from IPG Mediabrands and Magna has delved into the scale of misinformation on social networks and where brands are most at risk. Here’s what you need to know.

The IPG Mediabrands report recommends brands reconsider their ad spend on social platforms

After a string of high-profile brand safety breaches on social platforms, brands are reappraising where to invest their advertising spend. While this has yet to move the needle on the ongoing trend towards increased digital ad spend, those incidents are redefining and formalizing the relationship between brands and the platforms on which they choose to spend.

At the same time, calls for oversight and regulation are gathering steam, and the fallout from the Facebook Files revelations has advertisers worried they’re not being fully informed about the practices and effectiveness of advertising across certain channels.

That movement has gained traction with the publication of a report by IPG Mediabrands into the scale of misinformation and disinformation across social platforms. The report makes a distinction between the practices in place at different social media outlets to eliminate “misleading content” and, crucially, advises that brands reconsider where they are spending in order to ensure they are not caught up in any brand safety concerns, helping the wider industry force changes through.

Joshua Lowcock, global chief brand safety officer at Mediabrands network agency UM Worldwide, says: “While some platforms have policies on disinformation and misinformation, they are often vague or inconsistent, opening the door to bad actors exploiting platforms in a way that causes real-world harm to society and brands.”

Maze of moderation

The report looks at how each of the main social platforms, from Facebook to Twitch, responds to potential misinformation from their users. It notes that the sheer complexity of understanding each platform’s policies is a hindrance to understanding where to spend.

For example, the report notes that Reddit delegates responsibility for ensuring there is no harmful content to volunteer moderators, even within the ‘safe’ subreddits that can carry advertising. By contrast, Facebook and YouTube each link out to third parties including Wikipedia as a ‘proactive’ measure when the potential for misinformation arises.

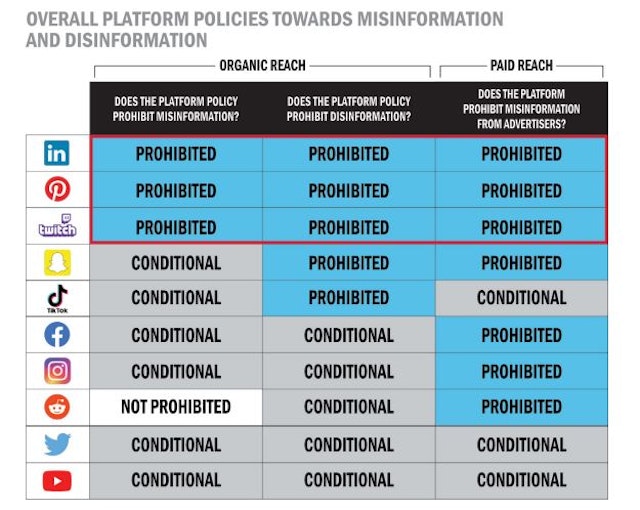

The report notes that some of the biggest players have what it terms ‘conditional’ policies regarding certain types of misinformation, which muddies the water further (see chart below).

Facebook, Instagram, YouTube and Twitter do not have strict policies on misinformation and disinformation, “often leaving a lot of gray and, subsequently, leeway for proponents of misinformation and disinformation to circumvent policies,” according to the report.

Even on the more regulated and specific platforms, such as LinkedIn, users typically have much more leeway to post misinformation than brands and advertisers do – even though both types of content coexist in the feed.

For extra layers of confusion, some platforms including Twitch and Snapchat consider users’ off-platform activity when it comes to moderating their behavior. The varying levels of strictness, use of third parties and policies for different types of misinformation currently mean there is often confusion about what counts as ‘brand safe’ across each platform.

Damage is being done right now

As the report makes plain, the lack of effective policies around misinformation is already causing problems for brands. It singles out vaccine disinformation as a flashpoint over the past 18 months, pointing to research from the Global Disinformation Index that found 189 ad-funded domains serving Covid-19 misinformation.

Crucially for brands and advertisers, however, it also notes that ad networks – not just social platforms – are responsible for enabling those sites to profit from misinformation. IPG Mediabrands also suggests that it is on brands and advertisers to be proactive and ensure their ad spend is not funding those sites, rather than delegating that responsibility elsewhere.

The report notes that damage is often done to the brand rather than to the platforms or networks on which it advertises. Elijah Harris, Magna’s executive vice-president of global digital partnerships and media responsibility, says: “Responsible brands want to ensure their messages are seen and shared alongside the right content on platforms. Consumers have also been watching this space to see how platforms adapt their moderation and enforcement techniques to curb content looking to spread false information in a coordinated way.”

Policing the platforms

Rather than calling for an end to ad spend on problematic social platforms, the report stresses that the problem is industry-wide, replicated across some advertising networks as well, and that brands have a key role to play in ensuring they do not inadvertently fund disinformation. One way to do so is to lean on the platforms to improve.

Harrison Boys, the director of standards and investment product EMEA at Magna and author of the study, says: “Marketers are right to be concerned when they find their advertising near misleading content as, unchecked, it could harm their reputations and the communities they serve. The industry, which joined forces against online hate speech and supported online privacy, needs to take a stand against misinformation and disinformation today.”

He suggests that brands can do so by using organizations such as NewsGuard, which highlights ‘quality’ journalism outlets that can be supported through ad spend. It is important to note, however, that even these third-party quality checkers do not always align on what counts as a reliable and misinformation-free environment. News sites also typically do not offer the reach and scale available to brands across some social platforms, so an appraisal of the relative pros and cons is necessary.

One thing the report absolutely does make clear is that misinformation and deliberate disinformation are endemic across social platforms, and that brands that blindly advertise across them are risking significant brand damage.